Unfiltered SEO. Straight-talking articles from the SEOCOM team

Real projects since 2008, distilled into analyses, guides and honest lessons learned. No magic formulas, no generic content.

-

SEO técnico

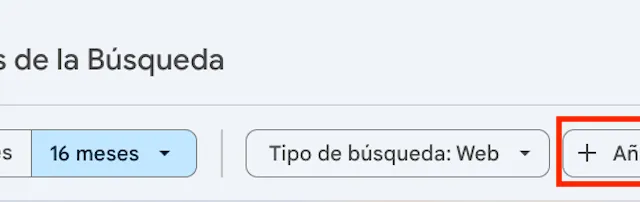

SEO técnicoRegEx para SEO: guía práctica con GSC y GA4 (2026)

Aprende a usar expresiones regulares en GSC y GA4: filtros prácticos para URLs, consultas, segmentación por tipología y análisis avanzado para SEO.

- GEO and AI

What GEO is and why your brand needs to show up in ChatGPT

88% of Spanish companies have no GEO strategy. Meanwhile, ChatGPT is recommending your competitors. This guide explains what GEO is, why it matters and what to do about it today.

- Technical SEO

80% of SEO audits are useless. Here's why

A 150-page PDF. 1,247 unprioritized findings. Generic recommendations. Six months later, nothing has been implemented. The problem isn't your team.

- Technical SEO

SEO migrations: 10 critical mistakes that destroy years of work overnight

A platform switch on Friday. A 60% traffic loss by Monday. These are the 10 mistakes we have most often seen wreck years of SEO work in a single night.

- Technical SEO

Core Web Vitals in 2026: INP has changed the rules of the game

INP has replaced FID as a Core Web Vital. 40% of websites that passed FID fail INP. Here's what changes and how to fix it.

- Link building

How we DON'T do link building at SEOCOM (and why 80% of agencies will get you penalized)

If another agency offers you link building at €30 per link, run. This is the complete list of what we DON'T do at SEOCOM, and why.

- Local SEO

Multi-location local SEO: what changes when you have 50 Google Business Profiles

With one Google Business Profile, everything is simple. With 50, structure, review management and NAP consistency become 80% of the local SEO work.

- Content and editorial

Brand storytelling: how to combine copywriting and narrative to build a brand that connects

You know the feeling: you publish, you write, you launch campaigns… but something doesn't quite click. The message is there, and so are the words, but they don't deliver what you were hoping for: connection.

- Content and editorial

What is a CAME analysis, and how do you turn your SWOT into a solid strategy for your business?

A CAME analysis is a strategic tool for turning the diagnosis from a SWOT (DAFO) analysis into concrete actions that improve the competitiveness of…

- Technical SEO

Measurable SEO goals: keys to evaluating your website's performance

One of the first questions to ask at the start of a project is: how do I measure its success? To answer it, one of the first tasks…

- Content and editorial

What ethical marketing is and how to use it to build a purpose-driven brand (that also gets results)

In recent years, marketing has stopped being linear and has become an ecosystem. What does that mean? It means marketing has gone from being a megaphone for…

- Technical SEO

How to implement lazy loading correctly for images and videos

Lazy loading is a basic technique for improving load speed and user experience on websites, especially when they contain many images and…

Shall we talk about your project?

30 minutes. No commitment. We'll tell you transparently what you can expect from SEO work done right.
